DRIFT

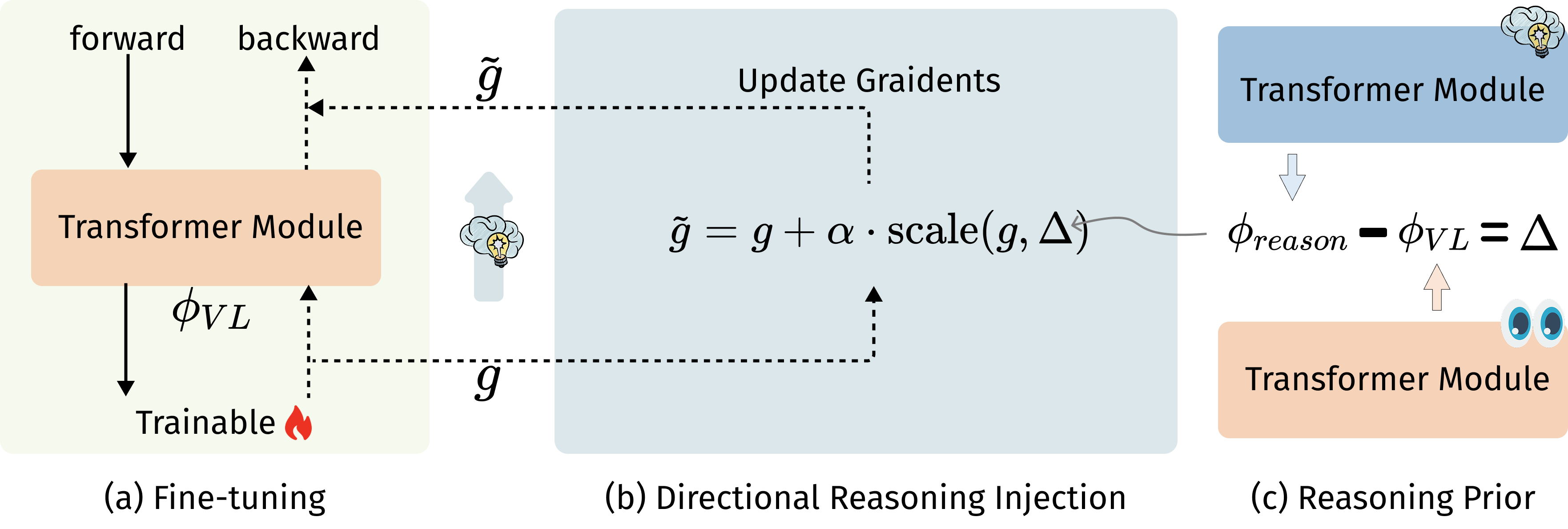

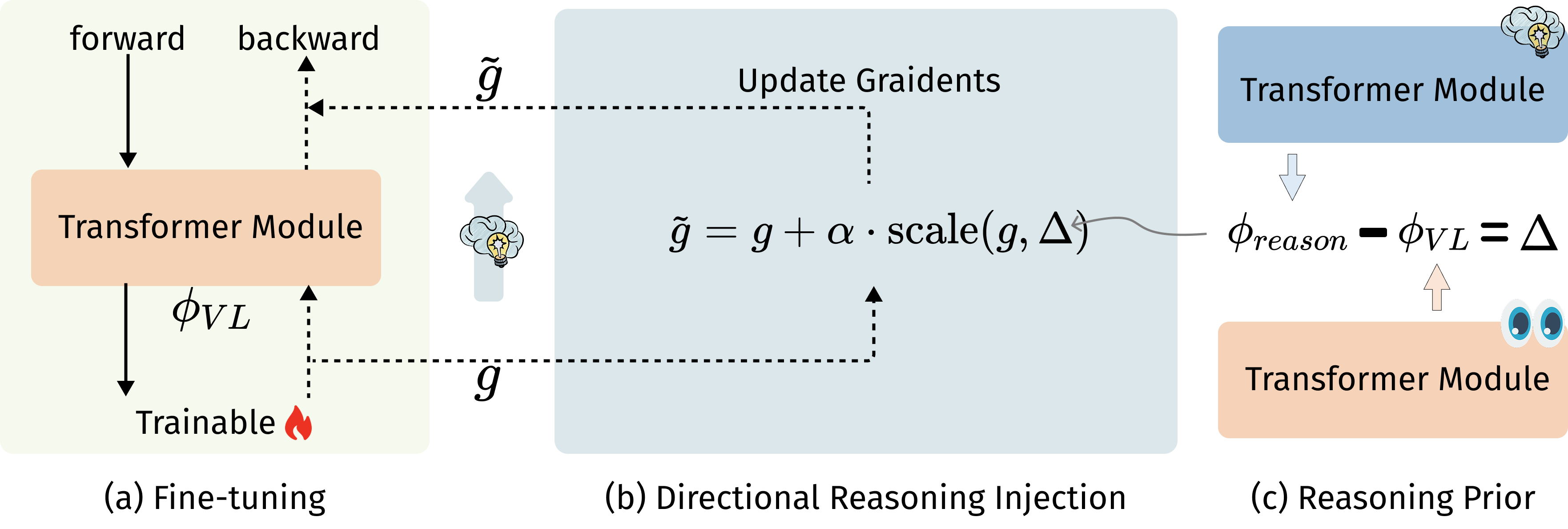

The first method to inject directional reasoning structure into MLLM fine-tuning. Instead of hoping reasoning emerges, we explicitly steer models toward structured, faithful cross-modal inference.

I teach machines to see, hear, and reason as one.

omni-modal LLMs · multimodal reasoning · audio-visual generation

Research Scientist, Tencent Hunyuan

I build multimodal systems that teach large models to perceive, infer, and create across vision, language, and audio. My recent focus is on faithful cross-modal reasoning in LLMs and few-shot generation with diffusion priors. I value work that is measurable and deployable.

My research centers on omni-modal reasoning and generation, with a preference for leveraging pretrained foundation models (LLMs, diffusion) over task-specific architectures. I focus on problems where quality and real-world scalability both matter.

Ph.D. Computer Science, University of Rochester · B.Eng, Nanjing University

A few projects that define what I care about.


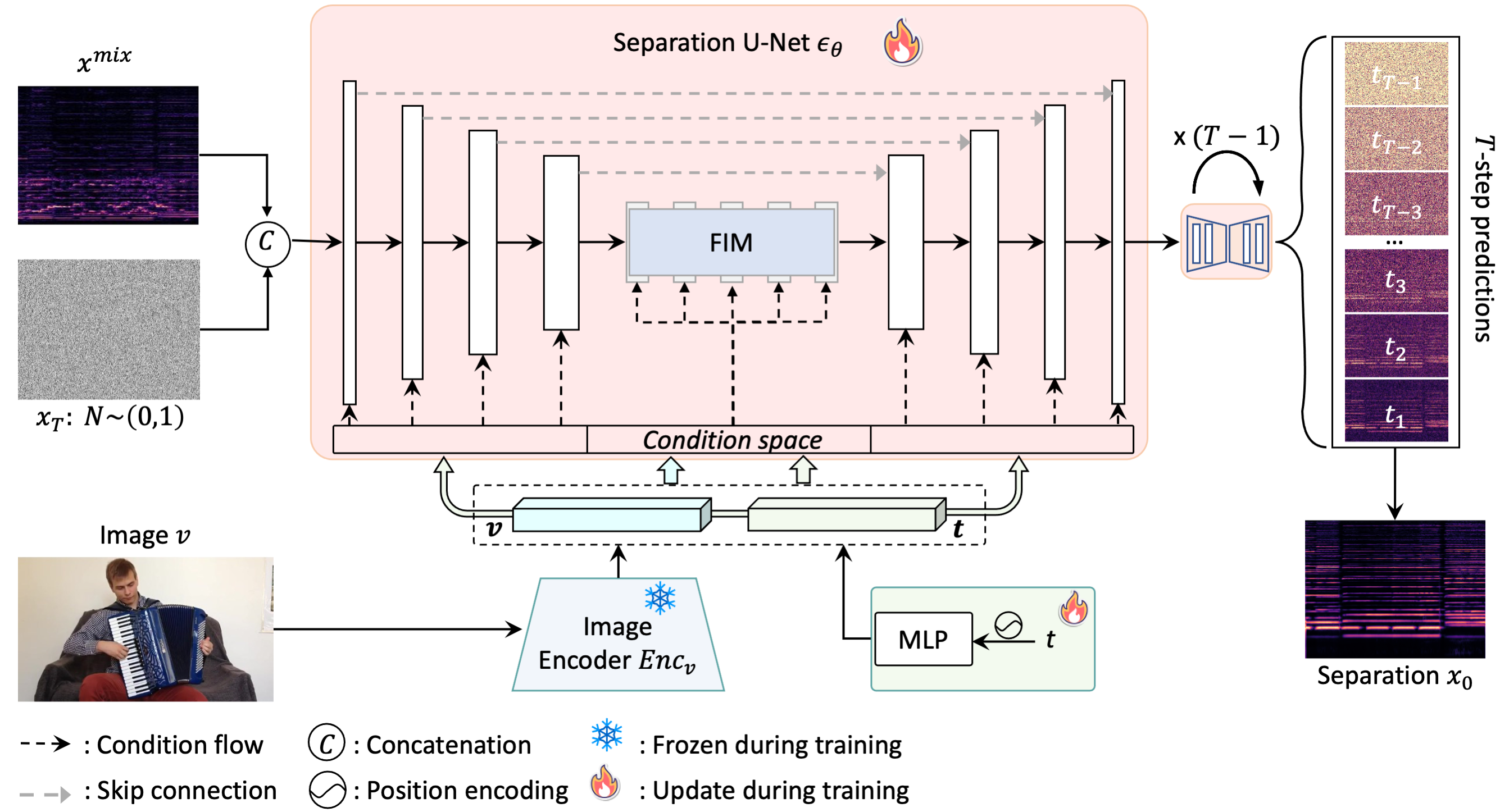

A zero-shot paradigm shift for audio separation: separate any sound category without ever training on it. Pretrained diffusion priors replace paired training data entirely.

The first generative diffusion approach to visually guided sound separation, producing high-fidelity audio from diverse real-world mixtures. Best Paper Award at ACCV 2024.

20+ papers at NeurIPS, CVPR, ICCV, ECCV, ICLR, IJCV, and more.

Omni-modal LLM research and deployment at scale.

Multimodal reasoning and efficient adaptation of large language models. Led to DRIFT, accepted at ACL Findings 2026.

Audio-visual learning for cinematic audio highlighting and sound design. Resulted in a CVPR 2025 paper on audio highlighting.

Neural acoustic modeling and spatial audio for human body soundfields. Published at ECCV 2024.

3D point cloud processing and non-local denoising methods.